Facebook recently rolled out its updated A/B testing tool, also known as split testing, in Belgium. The update gives advertisers a fresh way to further improve their ad and campaign performance based on data-driven insights. In this article we take a closer look at the update, how it differs from previous A/B testing methods, and how to make optimal use of the tool.

What is split testing (A/B testing)?

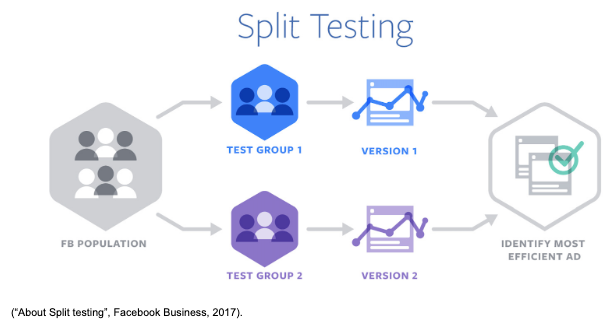

Split testing allows advertisers to measure the impact of different variables on their ads and, ultimately, on their campaign performance. This is done by measuring the results of an identical ad shown to different self-defined target groups, or test groups, as illustrated in the image.

An A/B test starts by identifying the variable to test, followed by defining the KPIs against which the results will be measured. Variables can range from differences in targeting (socio-demographic, contextual, interest, location, etc.) to platform placements and delivery optimization. More on this later.

Based on the chosen variable, two (or three) separate audiences are created. All groups are shown the same ad, which allows for an accurate, data-driven comparison: afterwards, the results can be compared and the best-performing audience identified.
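Once the test has run, "comparing results" comes down to computing the chosen KPI for each group and picking the best one. The sketch below assumes the KPI is cost per conversion; the `GroupResult` class, the `best_group` helper and the spend and conversion figures are all hypothetical illustrations, not Facebook output. It deliberately ignores whether the difference between groups is statistically significant.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class GroupResult:
    name: str
    spend: float      # amount spent on this test group (e.g. in EUR)
    conversions: int  # the KPI chosen before the test started

    @property
    def cost_per_conversion(self) -> float:
        # A group without conversions has no measurable cost per conversion.
        return self.spend / self.conversions if self.conversions else float("inf")


def best_group(results: List[GroupResult]) -> GroupResult:
    """Return the test group with the lowest cost per conversion."""
    return min(results, key=lambda r: r.cost_per_conversion)


# Made-up numbers, purely for illustration.
results = [
    GroupResult("Audience A", spend=500.0, conversions=40),
    GroupResult("Audience B", spend=500.0, conversions=25),
]
winner = best_group(results)
print(winner.name, round(winner.cost_per_conversion, 2))  # Audience A 12.5
```

Because the ad is identical in every group, a difference in cost per conversion can be attributed to the one variable that was changed.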

In order to maximize the effectiveness of your A/B test, only one variable should be tested at a time. This allows the advertiser to isolate the impact of the chosen variable on the ad’s performance.

Setting up your A/B test on Facebook

Split test setting

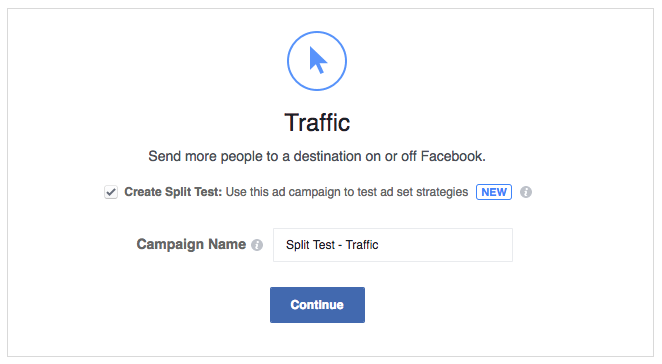

Split tests can be activated by checking the “create split test” checkbox in the campaign creation screen. Currently, split testing is available for the vast majority of campaign types, including the most important ones: reach, website traffic, conversions and app installs.

After selecting the campaign type, the advertiser can choose from the different predefined A/B testing options at ad set level.

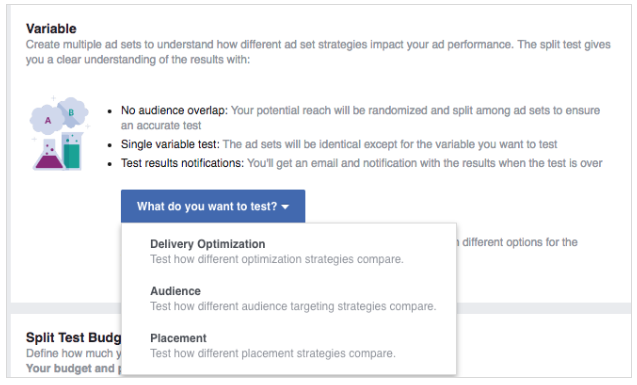

A/B tests can currently be run on three variables, all situated at ad set level: audience, delivery optimization and placement. We take a closer look at each of them below.

- Audience

Audience-based split testing lets you compare performance across two audiences, each with different targeting. The possibilities are almost limitless: socio-demographic targeting, interest targeting, but also location.

By testing different groups, an advertiser can identify their top-performing audiences, for example those driving the most conversions at the lowest cost. One example is a location test, which looks at how performance differs between people living in one area and people living in another.

Important to note: audience A/B testing is currently only available for saved audiences created in Facebook. Custom audiences, such as an advertiser’s remarketing audiences or email lists, should follow later.

- Delivery optimization

Delivery optimization allows an advertiser to test different optimization methods. Say an advertiser is running a campaign with the goal of driving new conversions. An A/B test could compare performance between optimizing the campaign for the lowest cost per conversion (maximizing conversions for the given budget) on the one hand, and optimizing for the lowest cost per click (sending as many people as possible to the website, where they can convert) on the other.

- Placement

Lastly, an A/B test can be run on the choice of placement. A classic placement test is Facebook News Feed vs Instagram feed. Through a split test, an advertiser can determine whether their ad performs better on the former or the latter platform.

After having chosen your A/B test setting, there are a few more points to keep in mind:

- Budget and test duration

Next, select a budget and a testing period. Your budget, which will be a lifetime budget rather than a daily budget, can be split either evenly or weighted. A weighted split lets you allocate a larger share of your budget (and thus of the potential reach) to your most important test group. This is useful, for example, when testing audiences that differ significantly in potential reach.
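The arithmetic behind an even versus a weighted split is straightforward. The sketch below is a minimal illustration, assuming a hypothetical `split_budget` helper that works in cents (to avoid rounding errors) and gives any leftover cents to the first, most important group. It is not how Facebook allocates spend internally, and actual delivery may not spend exactly these amounts.

```python
from typing import List


def split_budget(lifetime_budget_cents: int, weights: List[int]) -> List[int]:
    """Divide a lifetime budget (in cents) across test groups by relative weight."""
    if lifetime_budget_cents < 0:
        raise ValueError("budget must be non-negative")
    if not weights or any(w <= 0 for w in weights):
        raise ValueError("weights must be positive")
    total_weight = sum(weights)
    # Round each share down so the total never exceeds the budget.
    shares = [lifetime_budget_cents * w // total_weight for w in weights]
    # Hand any cents lost to rounding to the first (most important) group.
    shares[0] += lifetime_budget_cents - sum(shares)
    return shares


# Even split of a EUR 1,000 lifetime budget over two test groups
print(split_budget(100_000, [50, 50]))  # [50000, 50000]
# Weighted split favouring test group A, e.g. the audience with the larger reach
print(split_budget(100_000, [75, 25]))  # [75000, 25000]
```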

For the test duration, Facebook recommends a period of 3 to 14 days, so that sufficient data can be gathered, which improves the overall quality of your test.

- Ad creation

As a final step, the ad creatives need to be set up. This is no different from standard ad creation. At this point in time, Facebook’s split testing option does not yet cover A/B testing of ad creatives: all the tests mentioned above focus on ad set level variables, using a single ad creative that is pushed to two different test groups.

Creative tests can still be done, however, either by setting up several ads in one single ad set, which will aggressively optimize toward the best-performing creative, or by setting up two identical ad sets that each contain one version of the ad. The latter allows for a more even and controlled test.

How does this differ from the current way of A/B testing?

One of the most common problems advertisers currently face with A/B tests is audience overlap, which skews results to some extent. This is especially the case for A/B tests on placements or delivery methods: since both variants are pushed to the same audience, a single person can end up in both test groups. For example, one person might be shown the ad on both Facebook and Instagram during a placement test.

With the split test feature, Facebook randomly divides the potential reach over the two test groups, preventing overlap from occurring.
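Facebook does not describe exactly how it randomizes the audience. A common general technique for non-overlapping random assignment is hash-based bucketing, sketched below under that assumption: each person's ID is hashed together with a test ID, and the resulting number decides the group. The same person always lands in the same group for a given test and can never be in two. The function names, IDs and weights are hypothetical.

```python
import hashlib
from typing import Dict, Iterable, List, Sequence


def assign_group(user_id: str, test_id: str, weights: Sequence[int]) -> int:
    """Deterministically map one user to exactly one test group."""
    # Hashing (test_id, user_id) gives a stable pseudo-random number per person.
    digest = hashlib.sha256(f"{test_id}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % sum(weights)
    # Walk the cumulative weights to find the group that owns this bucket.
    upper = 0
    for group, weight in enumerate(weights):
        upper += weight
        if bucket < upper:
            return group
    raise AssertionError("unreachable: bucket is always below sum(weights)")


def split_audience(
    user_ids: Iterable[str], test_id: str, weights: Sequence[int]
) -> Dict[int, List[str]]:
    """Partition an audience into disjoint test groups."""
    groups: Dict[int, List[str]] = {g: [] for g in range(len(weights))}
    for uid in user_ids:
        groups[assign_group(uid, test_id, weights)].append(uid)
    return groups


audience = [f"user-{i}" for i in range(1000)]
groups = split_audience(audience, "placement-test-1", [50, 50])
print({g: len(members) for g, members in groups.items()})
```

The weights also cover the weighted split discussed earlier: passing `[75, 25]` sends roughly three quarters of the audience to the first group.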

In summary

By offering a predefined setting for A/B testing, Facebook encourages advertisers to further optimize the quality and results of their digital campaigns. Using the three split testing options and the data gathered from these tests, advertisers can gain insights and draw learnings to achieve the best performance.